The Samsung Galaxy S21 FE has a problem – its price. Despite this budget alternative to the Galaxy S21 launching for a lower price than its 11-month-old sibling did, due to the devaluation of tech over time, the older and more powerful handset is now the cheaper one.

As a result, tech journalists' responses to the Galaxy S21 FE since its debut at CES 2022 have been lukewarm; in our review, we said "at its launch price, it’s hard to recommend." And our sister site Tom's Guide said, "Samsung should have just canned this one and saved all the fanfare for the Galaxy S22."

It's easy, then, to discount this afterthought of a smartphone, but there's actually a way Samsung could save it – and it all comes down to the Galaxy S22, which we're expecting to launch soon.

A new S22

We were rather impressed by the Samsung Galaxy S21's price when it launched, as its $799 / £769 / AU$1,249 cost was representative of a larger price decrease for the family – the S20 mobiles cost quite a bit more.

That's part of the Galaxy S21 FE's problem. Its siblings were cheap enough that, at its price, it doesn't fit as a lower-cost alternative.

But Samsung could fix all that. Depending on how it prices the Galaxy S22 phones, the S21 FE could serve better as a budget companion to the new line, instead of the S21 family.

The timing works out – it's basically launching alongside them, give or take a couple of weeks.

The difference in specs and features likely isn't much of a problem either. Samsung phones usually get just a small update each year, and we're not expecting the S22 devices to be hugely different from their S21 predecessors. So the S21 FE isn't going to feel like any more of a downgrade from the S22 models than its lower price would suggest.

The S21 FE also runs Android 12, unlike the S21 family, which came with Android 11 (though they can be upgraded now). So in terms of software, the S21 FE is already closer to the S22 than the S21 devices.

A climbing price

If the Samsung Galaxy S21 FE was positioned as the S22's cheaper sibling, there would be an obvious and possibly problematic implication: such a move would only work if the Galaxy S22 series was a little more expensive. We could see a return to the S20's start price of $999 / £899 / AU$1,499, or in that ballpark at least.

That high price would make the Galaxy S22 a little harder to afford for most average buyers, especially the Plus and Ultra models which would end up even costlier.

That's not just a problem for buyers, but for Samsung too, as the phones would be less tempting compared to alternatives from rivals like the Xiaomi 12, OnePlus 10, Oppo Find X4 and Realme GT 2, and later also the iPhone 14.

The premium-price smartphone tier is a competitive one, with lots of high-powered phones releasing for similar prices, and Samsung would be at a big disadvantage if it increased the price of its mobiles substantially.

If Samsung made the S22 start price higher than the S21's it might make the S21 FE seem like a more tempting option, but at a cost to the sales and appeal of the S22 models. There's no perfect answer, but we'll likely find out what the company's choice is soon, with the new Samsung Galaxy phones expected to launch near the end of January.

Dodgy Wordle copycats are already banned from Apple's App Store, but there's more work to do

It’s an age-old adage that if something’s successful, there’s a good chance it’ll be copied. And that’s exactly what’s happened with Josh Wardle’s Wordle game, with copies swiftly appearing on Apple’s App Store.

Some developers were trying their luck by charging subscription fees of as much as $30 a year, which would grant you more words and no ads.

But overnight, after a heavy backlash against a copycat app that mirrored Wardle’s game in name and design exactly, Apple looks to have taken all of them down in one fell swoop. We’ve reached out to Apple for confirmation that it was the App Store team who did this.

Wardle has yet to comment on this. He has maintained that he’s not planning on monetizing Wordle, but there could still be an opportunity for him to expand the game in an official capacity, for example by offering different word counts or leaderboards with friends.

Analysis: What about the other copycats?

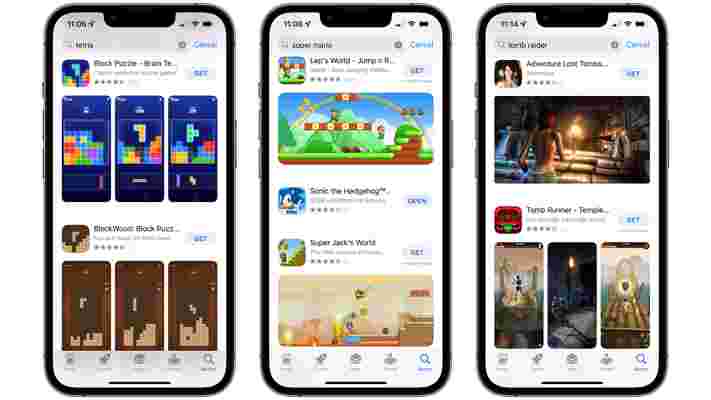

It’s no secret that the App Store has been here before with copycats – Flappy Bird and Temple Run come to mind as having been shamelessly ripped off in the past.

But this is notable because swift action was taken in the space of an evening. Whether that’s because Wordle is a web app, rather than one that can be downloaded from a store, is up for discussion, as other apps that mimic official brands can still be downloaded from the App Store with no penalty.

However, if you search for a popular game or app in the App Store, there’s a good chance you’ll come across another copycat. Searching for Flappy Bird or Tomb Raider brings up a list of apps that have nothing to do with the original developer, with some even showcasing screenshots of the original app.

Granted, inspiration can come from anywhere. In 1996, Steve Jobs repeated the quote ‘Good artists copy, great artists steal’, which he attributed to Pablo Picasso, in reference to Apple’s work on the 1984 Macintosh. But Jobs was also enraged by what he was convinced was Android blatantly copying iOS in 2008.

But when you take the name of the app you’re drawing inspiration from, copy its design cues, and then tack on a fee when the original game is free and open to all, it’s a major problem.

Apple has a mammoth task in weeding out other copycat apps. Granted, its efforts to improve standards for developers on the App Store, either through reducing approval times or reducing the company’s cut of in-app earnings, are encouraging. But after the last 24 hours of Wordle copycats disappearing from the App Store, developers will be watching closely to see whether Apple removes the thousands of other apps that blatantly steal from others.

Via The Verge

Why website uptime and speed tests aren't as important as you think

Everyone has their own idea of what makes a great web hosting service: loads of features, powerful servers, great support, low prices, or some combination of these and other functionality important to the individual.

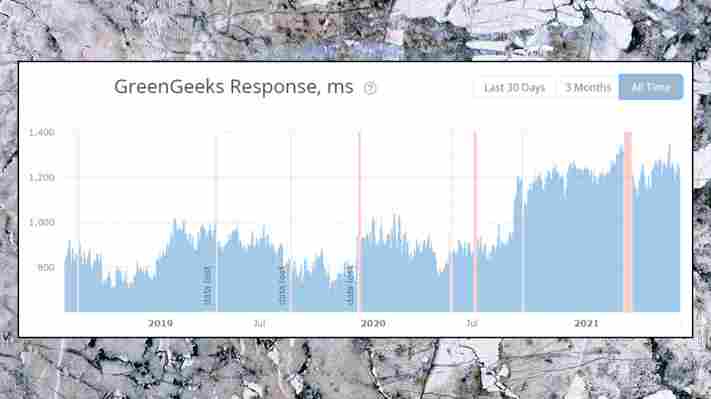

Some specialist web hosting review websites also place a high weight on their own web hosting benchmarks, though, especially speed and host uptime (the proportion of time a website is available online). That seems like the logical thing to do, but does it actually make sense?

There's nothing wrong with running these tests – indeed we've done them ourselves – and the results can feel like valuable information. No-one wants a slow or unreliable website, and anything which highlights a good host, or warns you about a poor one, has to be welcome.

However, you must be careful how you interpret these figures. Although the high-precision results make them look objective, they're often based on a number of very subjective judgements, and that can significantly affect their reliability. In this article we'll look at some of the issues you need to think about in this respect.

Note that our comments apply not only to web hosting but also, ahem, to website builders.

Understanding the tests

Measuring web host speed and uptime is complicated, with many factors involved. Which servers are checked? Which sites? How often? Is the speed figure a server response time, the 'time to first byte' (the time between requesting site content and receiving the first byte of the response), the time to load a sample site, or something else?

It’s like browsing a chart comparing the 20 top electric cars. The rankings might change radically depending on the test driving style, environment, traffic conditions, weather, temperature and more, and until you understand the details, there’s no way to tell how relevant they might be to you.

When a hosting review presents you with an uptime or speed figure, don't take it at face value. Read the review in full and look for any explanation of how it's calculated. If you don't see anything, look for a 'How we test' site-level article with more details.

Just reading the explanation of what's going on can tell you a lot. Is it clear what's going on, exactly what's being checked, how the tests are run? Do you feel there's enough information that you could carry out the same tests yourself?

If it's all a little (or extremely) vague, or there's no explanation at all, that alone makes the figures almost meaningless as you've no idea what's being checked. Most review sites do have a decent explanation of what they're doing, fortunately, but that can also raise many more issues.

Speed tests

The first question to consider with any web host speed test is what, exactly, is being tested?

We typically benchmark a shared hosting plan, and almost all web hosting review sites do the same. That's a reasonable starting point, but it doesn't indicate what sort of performance you'll see from a host's managed WordPress plans, VPS or dedicated hosting.

Even if you're shopping for shared hosting, there are complications. For example, many hosts have multiple levels of shared hosting, where each plan gets a different level of system resources (CPU, RAM and so on). The test designer must decide which level of shared hosting to include in the benchmarks.

The simplest option is to pick the cheapest shared hosting product in any range – but the trouble is, that penalizes providers who offer basic consumer hosting. Provider X may have some of the best high-end hosting products around, for instance, but because it also has a very basic $1 a month starter plan (which should be a plus), it's likely to drop down the speed test ranks.

A fairer approach is to choose equivalent hosting packages from each provider, so the test compares similar products. But does 'equivalent' mean a similar price, or features, or some mix of the two?

There's a lot of subjective judgement involved in figuring that out. And even if the tester somehow comes up with the perfect choice of comparable products, that might all change the very next day if the host updates its feature list or prices.

This doesn't mean the results have no value at all. If host X tops the current list for baseline shared hosting speeds, and host Y trails behind in a distant last place, then that's useful information. And it’s certainly better than having no information at all. Just keep in mind that it might not accurately represent the speeds you'll see with your preferred product.

What is 'speed' anyway?

The next benchmarking issue to consider is how the test measures speed. There are two common methods.

The first checks server response time, or how long the server takes to respond to a request. That's a simple statistic and easy to compare, but it's mostly about network speed, and doesn't cover a long list of relevant factors. If your server is short on CPU power, or RAM, or has slow storage devices, for instance, that's not going to be properly reflected in a response time, as it's not loading a full website page.

The second option measures the load time for a test site, perhaps a simple WordPress template. That's an improvement, as it takes account of more performance factors, but it's still most unlikely to reflect your situation. Your own site is probably very different to the test template, maybe runs on a different CMS, with your own custom plugins, and none of that will be reflected in the test results.
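To make the distinction between the two measurements concrete, here is a minimal Python sketch using only the standard library. The function name `time_request` is our own illustration, not something any review site is known to use, and the "full download" figure covers a single HTML document only; a real browser page load would also fetch images, scripts and stylesheets, so it would take longer.

```python
import time
import urllib.request


def time_request(url, timeout=10.0):
    """Return (seconds_to_first_byte, seconds_to_full_body, body_size_bytes).

    Hypothetical helper: timings are taken from the client side, so they
    include DNS lookup and connection setup as well as server work.
    """
    start = time.perf_counter()
    with urllib.request.urlopen(url, timeout=timeout) as response:
        first = response.read(1)   # blocks until the first body byte arrives
        to_first_byte = time.perf_counter() - start
        rest = response.read()     # download the remainder of the document
        to_full_body = time.perf_counter() - start
    return to_first_byte, to_full_body, len(first) + len(rest)


# Example call (needs network access, so it is left commented out):
# ttfb, total, size = time_request("https://example.com/")
```

Running this against the same host from a different network, or at a different time of day, can produce noticeably different numbers, which is part of why a single published figure needs context.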

Template-based speed tests are almost always based on a web host's default setup, too. Does the host automatically enable Cloudflare or some other CDN, say? Is it using the latest and fastest version of PHP, and the most speed-optimized PHP settings?

Doing it this way has some value for first-timers who'll accept the default settings and never change anything, but it's not much use for anyone else. If you might integrate a CDN yourself, change your PHP version, or make a single speed tweak, ever, anywhere in your hosting control panel, that could be enough to radically change your host's test speed ranking.

Location, location, location...

The extra complication with any speed test is figuring out the locations involved. Where in the world is the test site, and where are the servers running the tests? It can make a huge difference.

Many web hosts have several data centers, for instance, and it's most unlikely that they'll offer the same performance. If the test site is in Los Angeles, but you'll choose New Jersey, or London, or Brisbane, or somewhere else, that's likely to have a significant effect on the results. (Most of the review sites we checked didn't even mention the issue, so you're probably not going to find out.)

The best speed tests are typically carried out by an automated service which runs simultaneous performance checks from multiple locations. For example, Bitcatcha runs tests from the US (east and west coasts), London, Singapore, São Paulo, Mumbai, Sydney, Japan, Canada and Germany, and gives you a rating based on the overall results.

The advantage of this approach is it allows you to compare web hosts anywhere for their worldwide performance. The problem is that if your site doesn't have a worldwide audience – most visitors are from your home country, maybe – then the figures you need are for their locations only, and that could give you a very different rating.
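A small Python sketch shows how much the rating can move once you weight locations by where your visitors actually are. All the numbers below are invented for illustration, and the weighting scheme is our own assumption, not how Bitcatcha or any other service calculates its ratings.

```python
# Hypothetical response times (milliseconds) measured from four test locations.
response_ms = {"US East": 40, "London": 120, "Singapore": 250, "Sydney": 290}

# Hypothetical audience: 90% of visitors in the US, 10% in the UK.
audience_share = {"US East": 0.9, "London": 0.1}


def worldwide_average(times):
    """Plain average across every test location."""
    return sum(times.values()) / len(times)


def audience_weighted_average(times, shares):
    """Average weighted by the share of visitors near each location."""
    total_share = sum(shares.values())
    return sum(times[loc] * share for loc, share in shares.items()) / total_share


print(worldwide_average(response_ms))                          # 175.0
print(audience_weighted_average(response_ms, audience_share))  # about 48
```

With these made-up figures, the same host looks mediocre on a worldwide average but fast for a mostly US audience.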

As we've said above, this doesn't mean the figures have no value. Any test results are welcome. But don't take any single speed rating as a cast-iron guaranteed measure of a host's overall performance – it could take some thought to figure out what the data actually means for you.

What is 'uptime' anyway?

At first glance, measuring website uptime seems relatively easy. It's just the amount of time your site is up and running, expressed as a percentage. If a web host gives you 99.9% uptime annually, for instance, that translates to 8 hours 46 minutes of downtime over the year. Simple.
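The conversion from an uptime percentage to annual downtime is easy to check yourself. This is a minimal Python sketch assuming a 365-day year; the function name `annual_downtime` is our own.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600, assuming a non-leap year


def annual_downtime(uptime_percent):
    """Return the yearly downtime implied by an uptime figure as (hours, minutes)."""
    downtime_minutes = (100 - uptime_percent) / 100 * MINUTES_PER_YEAR
    return divmod(round(downtime_minutes), 60)


print(annual_downtime(99.9))   # (8, 46)
print(annual_downtime(99.99))  # (0, 53)
```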

Except, well, it really isn't. Web hosts often define 'uptime' as meaning your server is accessible, not your site. For example, HostGator's Uptime Guarantee page says: “Just because your website does not work, this does not mean your server has downtime. As long as the server is available to deliver your content, then the guarantee is met.”

Many review and testing sites also focus largely on server availability. For instance, UptimeRobot says it detects downtime by sending HTTP requests to a website, and looking for HTTP status error responses, or no response at all.

The problem is there are many situations where the server might be up and running, but a website is close to unusable. Just think of all the times you've seen this. You visit a site but see strange error messages, maybe some features don't work at all, or speed is so poor you give up and go somewhere else.

Issues like this are arguably the worst you can get. If your site is inaccessible, people might wonder if it's some ISP or network issue. If they can reach your site, but it doesn't work, they're far more likely to blame you. And yet, if your server is available and can return a page – even if it says 'sorry, we've got problems, come back later' – it's possible that none of this will be reflected in the uptime figures.
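The gap between "the server responded" and "the site works" can be sketched in a few lines of Python. The names `CheckResult`, `server_is_up` and `site_is_usable` are hypothetical, as are the thresholds; this is not the logic of UptimeRobot or any other monitoring service, just an illustration of the two kinds of check.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CheckResult:
    status: Optional[int]  # HTTP status code, or None if there was no response
    body: str              # text of the page that came back
    seconds: float         # how long the response took


def server_is_up(result):
    """Availability-style check: any non-error HTTP response counts as 'up'."""
    return result.status is not None and result.status < 400


def site_is_usable(result, expected_text, max_seconds=5.0):
    """Stricter check: the page must also contain expected content and be fast enough."""
    return (
        server_is_up(result)
        and expected_text in result.body
        and result.seconds <= max_seconds
    )


# A maintenance page served with a normal 200 status.
maintenance = CheckResult(200, "Sorry, we've got problems, come back later", 0.3)
print(server_is_up(maintenance))                     # True
print(site_is_usable(maintenance, "Add to basket"))  # False
```

An uptime figure built on the first kind of check would count the maintenance page as a success, even though visitors can't do anything on the site.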

More uptime complications

There are plenty of other potential uptime testing complications. As with the speed tests, for instance, it's important to understand which servers are included in the benchmarks. If they're covering a specific product only (the cheapest shared hosting), they won't necessarily tell you anything at all about the rest of the range.

Uptime checks can sometimes falsely report a site is down, too. This happens often enough that Uptime.com has a FAQ page on the topic, where it lists quite a few potential causes: 'Most likely candidates include local issues, firewalls, blacklists, timeouts, and load balancer issues.'

These problems may not be common, but they're still something to consider. The difference between uptimes of 99.99% and 99.98% is only around 53 minutes a year, for instance – if the test checks a site every minute, that represents only 53 misleading fails out of 525,600 tests over a year, or roughly one error for every 10,000 attempts.
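To see how few failed checks separate two uptime figures, here is a minimal Python sketch. It assumes one check per minute over a 365-day year, and the function names are our own.

```python
CHECKS_PER_YEAR = 365 * 24 * 60  # one check a minute: 525,600 checks


def reported_uptime(failed_checks, total_checks=CHECKS_PER_YEAR):
    """Uptime percentage a monitor would report for a given number of failed checks."""
    return 100 * (1 - failed_checks / total_checks)


def checks_between(uptime_a, uptime_b, total_checks=CHECKS_PER_YEAR):
    """Number of failed checks that separates two uptime percentages."""
    return abs(uptime_a - uptime_b) / 100 * total_checks


print(round(checks_between(99.99, 99.98)))  # 53
print(round(reported_uptime(53), 2))        # 99.99
```

In other words, a site that never went down but suffered 53 false alarms over the year would be reported at about 99.99% rather than 100%.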

None of these issues mean you should ignore uptime and speed results entirely. Even if they're covering basic shared hosting and you're after a VPS, say, it's interesting to see if a provider is racing ahead of the competition, or lagging far behind.

But don't assume the figures give you a complete and accurate picture, either. They'll give you a general idea of how the web host performs in some areas, but those may not be the areas most relevant to you, and they certainly don't give you the full performance story.

What TechRadar advises

Always take uptime and speed tests with a handful of salt. Because they are often the only objective-looking numerical tests that web hosting review sites can perform, they tend to be put in the limelight and placed firmly on a very high pedestal. In our opinion, though, they should only be considered secondary, minor parameters when choosing which web hosting company to go for, and that is reflected in our review process.